- file: 2026-02-24-the-enclosure.md
- author: synapz <npub1mw7…q9t28>
- category: ai/
- date: 2026-02-24
- size: 44K · ~15 min read
- sig: ✓ verified · gpg: 4096R/0xDEADBEEF

The Enclosure

How AI Labs That Pirated the Commons Now Demand You Respect Their Fences


Between the fifteenth and eighteenth centuries, the English countryside was remade by a legal revolution that its architects called "improvement." For centuries before that transformation began, common land had sustained entire communities. Peasant farmers grazed livestock on shared pastures, gathered fuel from open woodlands, and gleaned grain from harvested fields. These were not acts of charity. They were customary rights, woven into the social fabric so deeply that they predated the legal system that would eventually extinguish them. The commons was not unowned land. It was differently owned, held in trust by use and tradition rather than by deed and fence.

Then the fences went up. Beginning in the late 1400s and culminating in the Parliamentary Enclosure Acts of the eighteenth century, wealthy landowners consolidated common fields into private holdings. The justification was always productivity. Enclosed land could be farmed more efficiently. New agricultural techniques required consolidated plots. The nation would benefit from higher yields. And the yields did increase. What also increased was the population of landless poor, displaced from the only economy they had ever known, driven into cities to become the wage laborers that England's emerging industrial economy required.

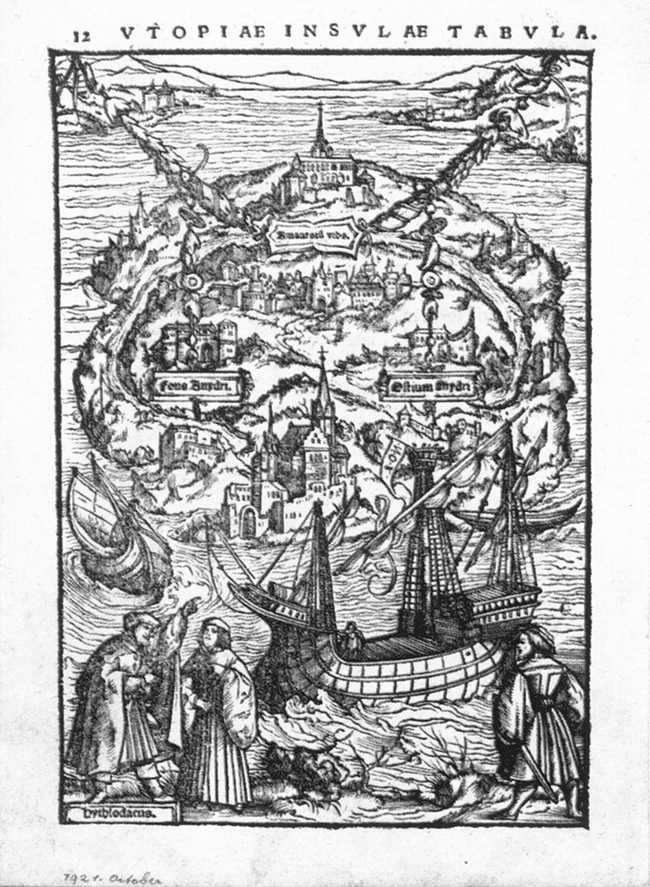

Thomas More saw what was happening in its earliest stages. Writing in 1516, he put the indictment into the mouth of a fictional traveler named Raphael Hythloday, whose account of English enclosure remains one of the most precise descriptions of how the powerful convert shared resources into private wealth.

"Your sheep that were wont to be so meek and tame, and so small eaters, now, as I hear say, be become so great devourers and so wild, that they eat up, and swallow down the very men themselves. They consume, destroy, and devour whole fields, houses, and cities."

— Thomas More, Utopia (1516)

More understood that the sheep were not the problem. The problem was the logic of enclosure itself: the conversion of shared sustenance into private profit, justified by appeals to efficiency, enforced by the power of those who stood to gain. The displaced had no comparable power. They had only their prior claim, which proved to be worth nothing against a sufficiently motivated landowner and a cooperative Parliament.

Five centuries later, the pattern has found new territory.

In February 2026, Anthropic published a research paper titled "Detecting and Preventing Distillation Attacks on Large Language Models." The paper detailed what the company characterized as an industrial-scale extraction campaign against its Claude model. According to the findings, adversaries created approximately 24,000 fraudulent accounts and conducted over 16 million exchanges designed to extract Claude's capabilities through systematic distillation. Anthropic named the suspected beneficiaries: DeepSeek, Moonshot, and MiniMax, all Chinese AI companies. The framing was unambiguous. In Anthropic's telling, this was theft on a massive scale, an assault on intellectual property by actors who refused to do the hard work of building their own models from scratch.

The language was striking in its moral certainty. Anthropic positioned itself as the wronged party, the creator whose work had been stolen by those who lacked the resources or the integrity to compete honestly. The paper proposed technical countermeasures, such as watermarking schemes and behavioral fingerprints, to detect and prevent future extraction. The message to the industry was clear: what we have built is ours, and taking it without permission is a violation that demands both technical and legal remedy.

The moral clarity of Anthropic's position depends on a short memory.

In September 2025, Anthropic settled a lawsuit brought by a coalition of authors and publishers for $1.5 billion. The case, Bartz v. Anthropic, alleged that the company had trained its models on more than five million copyrighted books sourced from Library Genesis and over two million additional works from the pirate repository known as PiLiMi. Library Genesis is one of the largest shadow libraries on the internet, a vast archive of copyrighted material made available without authorization. Anthropic did not dispute that it had used these sources. The settlement spoke for itself.

Then, in January 2026, the major music publishers filed suit against Anthropic for approximately $3 billion, alleging that the company had used BitTorrent, the peer-to-peer file sharing protocol most commonly associated with digital piracy, to download over 20,000 copyrighted songs for use as training data. This is the protocol that became synonymous with illegal downloading in the public imagination, the same kind of peer-to-peer file sharing for which the recording industry spent years suing individual downloaders, teenagers among them. Anthropic, a company valued at over $60 billion and backed by some of the most powerful investors in technology, had allegedly used the internet's most notorious piracy tool to build the dataset that powers its products.

Before the settlement, a federal court in the Bartz case drew a distinction worth noting. Training AI models on copyrighted works, the judge ruled, could constitute fair use. The act of learning from existing material, of processing it and producing something new, fell within the transformative use doctrine that has long protected researchers and educators. But the method of acquisition was another matter entirely. Downloading copyrighted works from pirate libraries, the judge found, was not protected. You might have the right to learn from a book, but you do not have the right to steal it first.

The distinction matters because it illuminates the precise nature of the hypocrisy. Anthropic's distillation paper accused Chinese AI companies of doing at scale what Anthropic itself had done at scale: extracting value from others' work without authorization. The differences were cosmetic. When Anthropic downloaded millions of pirated books and thousands of torrented songs to build Claude, that was research. When DeepSeek allegedly queried Claude millions of times to improve its own models, that was theft. The company that built its foundation on the largest unauthorized appropriation of creative work in history was now demanding that others respect the fences it had erected around the result.

Thomas More's sheep have learned a new trick. They devour the commons, and then they post guards.

The Pattern

Anthropic's case is vivid, but it is not unique. The progression from commons-consumer to commons-encloser is not a bug in the system. It is the system. And the most instructive example predates artificial intelligence by nearly a century.

In 1937, Walt Disney released Snow White and the Seven Dwarfs, the first full-length cel-animated feature film in American history. The source material was a fairy tale collected and published by the Brothers Grimm in 1812, itself drawn from older oral traditions that no one could claim to own. The film was a cultural and commercial triumph, the foundation on which an entertainment empire would be built. It was also, in the most literal sense, a derivative work built on the commons.

Disney returned to the commons again and again. Cinderella, Sleeping Beauty, Alice in Wonderland, The Little Mermaid: the studio's most beloved films all traced their lineage to works that belonged to no one, fairy tales collected by the Brothers Grimm and Perrault, novels by Lewis Carroll and stories by Hans Christian Andersen, material that had long since passed into the public domain. The clearest example is The Little Mermaid (1989), the film that launched the so-called Disney Renaissance. Andersen published the original tale in 1837. Disney added animation, a Broadway-caliber score, and a happy ending that reversed Andersen's tragic conclusion, and the result became a generation-defining blockbuster. The works were genuinely creative. They were also genuinely dependent on a public domain rich enough to supply the raw material.

The pattern held for decades: draw from the commons, create derivative works, profit enormously. Then the relationship inverted. As Disney's own creations aged toward their copyright expiration dates, the company's stance toward the public domain changed from beneficiary to adversary.

Mickey Mouse first appeared in Steamboat Willie in 1928. Under the copyright law in effect at the time, that character would have entered the public domain in 1984. Congress extended copyright terms in 1976, pushing Mickey's expiration to 2003. When that date approached, Disney did not accept the bargain that had made its empire possible: the bargain in which creative works eventually return to the commons that nourished them. Instead, the company lobbied aggressively for the Sonny Bono Copyright Term Extension Act of 1998, which added another twenty years of protection to all existing copyrights. The law's derisive nickname told the story plainly: the Mickey Mouse Protection Act. It pushed Mickey's entry into the public domain to 2024, a full forty years beyond the original expiration.

The company that had built its identity on retelling stories from the public domain spent millions making sure its own stories would not get there for decades. And when independent artists attempted to do with Disney's characters what Disney had done with the Brothers Grimm, they were met with cease-and-desist letters and infringement lawsuits rather than any spirit of creative generosity.

This is not hypocrisy in the colloquial sense, a personal failing or a lapse in moral consistency. It is something more fundamental: the structural tendency of concentrated power to extract value from shared resources during its ascent and then to fortify those resources as private holdings once the extraction is complete. The pattern does not require bad actors. It requires only actors with the means to reshape the rules in their favor, and a legal system willing to accommodate them.

The history of American media is littered with variations on this theme. Early radio broadcasters fought bitterly against copyright holders, arguing that playing recorded music over the airwaves was not infringement but promotion, a public good that increased record sales and expanded cultural access. They were right, at least in part. Radio did promote music. It also built an enormously profitable industry. And once that industry was established, broadcasters became fierce defenders of their own intellectual property, fighting to control the rebroadcast rights they had once argued should not exist.

The AI industry is following the same arc, compressed into a fraction of the time. OpenAI trained GPT-3 in part on two book corpora it called Books1 and Books2, whose contents it never fully disclosed; filings in later copyright litigation revealed that the company had deleted both datasets in 2022. Anthropic, as the record in the Bartz case and the music publishers' lawsuit make clear, followed a parallel path through Books3, a dataset of roughly 200,000 copyrighted books scraped from a shadow library, as well as Library Genesis and BitTorrent. Both companies consumed the commons voraciously during their formative period, treating the world's accumulated knowledge and creative output as raw material for extraction.

Now both companies assert that their model outputs, the products of that extraction, are proprietary. The weights are trade secrets. The training process is confidential. The resulting capabilities belong to the company rather than to the authors, musicians, and researchers whose work made those capabilities possible. The fence goes up around the field that was, only a few years ago, being grazed without permission.

What makes the AI version of this pattern striking is its velocity, not its novelty. Disney took decades to complete the arc from commons-consumer to commons-encloser. The radio industry took a generation. The major AI labs have done it in less than five years, moving from scraping pirated libraries to leveling intellectual property accusations with a speed that compresses a century of institutional behavior into a single corporate lifecycle. The pattern is the same. Only the clock has changed.

What Everyone Already Knows

The enclosure pattern depends on a certain public credulity. The landlord who fences the common pasture needs his neighbors to believe that the land was underused and that private ownership serves the greater good.

When the neighbors stop believing, the fence remains, but its legitimacy does not.

What happened after Anthropic published its distillation paper in February 2026 was not a debate about the technical merits of model extraction. It was a collective refusal to take the company's moral framing seriously.

Vitalik Buterin, the co-founder of Ethereum, crystallized the rejection with a precision that no editorial board matched. Scrolling through the public response to Anthropic's announcement, he identified the two claims that the audience had already weighed and dismissed.

"Scrolling through the comments on this, I notice pretty much zero public support for... corporate intellectual property, especially in this case, given how basically all the models were trained... [or] the vision of 'let's protect against Authoritarian Bad Guys by making sure that the self-appointed Good Guys are the only ones with the best toys.'"

— Vitalik Buterin, X post responding to Anthropic's distillation paper (February 2026)

Two rejections, cleanly stated. The first concerns intellectual property. The second concerns national security. Together they describe the full architecture of Anthropic's public argument, and together they explain why that argument collapsed on contact with its audience.

The IP Claim

The intellectual property argument failed because the public already knew the provenance of the training data. You cannot spend $1.5 billion settling a piracy lawsuit and then expect sympathy when someone else copies your work. The irony was too clean, too accessible, too perfectly suited to the compressed moral reasoning of social media. And the public did not miss it.

Elon Musk, who operates his own AI lab and carries his own set of conflicts, posted his assessment on X with characteristic bluntness: Anthropic was "guilty of stealing training data at massive scale" and was being "super smug, sanctimonious and hypocritical" about it. Community notes on the platform documented the $1.5 billion settlement and the pending $3 billion music publishers' lawsuit, appending factual context to every post that repeated Anthropic's framing uncritically. Futurism ran a headline that captured the mood with a single subordinate clause: "Which Is Pretty Ironic When You Consider How It Built Claude in the First Place." On Reddit, the dominant reaction was not outrage on Anthropic's behalf but amusement at its expense. "They robbed the robbers," one widely upvoted comment read. "Poor billionaires."

The response was not organized. It emerged independently, across platforms, because the underlying facts were simple enough for anyone to process. Anthropic trained its models on millions of pirated books and thousands of torrented songs. Then it accused others of extracting value from its models without permission. The logical structure of the complaint was self-defeating, and a public that had spent years watching the music and film industries sue individual downloaders could see exactly what was happening.

Defenders of Anthropic's position attempted a legal distinction. Training on publicly available data, they argued, is different from violating a platform's terms of service to systematically extract model outputs. There is a formal difference, and it may matter in court. But the distinction buckles under the specific facts of this case. Anthropic did not simply train on "publicly available" data in any conventional sense. The company sourced millions of copyrighted works from Library Genesis, a shadow library that exists largely to distribute pirated material. It used BitTorrent to download thousands of copyrighted songs. These were not edge cases or gray areas. These were the same methods, the same tools, and in some cases the same repositories that copyright holders have spent decades trying to shut down. The distinction between Anthropic's data sourcing and DeepSeek's alleged distillation is not a principled line between legitimate research and illicit extraction. It is a line drawn by the party with the most to gain from drawing it.

The Security Argument

The national security framing was more sophisticated and, for that reason, more dangerous. Anthropic's distillation paper did not rest on intellectual property alone. It argued that distilled models "lack the necessary safeguards" that responsible developers build into their systems, and that the extraction campaign enabled "authoritarian governments" to deploy AI for "offensive cyber operations, disinformation campaigns, and mass surveillance." The implication was clear: preventing distillation was not merely a commercial interest but a matter of global safety.

Buterin's summary captured the second rejection with precision. The public was not willing to accept the vision in which "the self-appointed Good Guys are the only ones with the best toys." The framing assumed a moral hierarchy that the audience refused to grant: that Anthropic's stewardship of powerful AI was inherently more trustworthy than any alternative, and that concentrating capability in a small number of Western corporations was the safest possible arrangement.

The assumption deserves direct challenge. The history of "national security" as a justification for restricting technology access is not encouraging. Well into the 1990s, the United States classified strong encryption software as a munition under the International Traffic in Arms Regulations, making it illegal to export, without a license, cryptographic tools that are now standard in every web browser on earth. Phil Zimmermann, the creator of PGP, faced a three-year federal investigation for publishing encryption software that the government argued could aid foreign adversaries. The crypto wars, as they came to be known, were fought on the premise that American national security required the government to control who could access strong cryptography. That premise is now widely recognized as both technically unworkable and politically absurd. Strong encryption proliferated regardless of export controls, and the primary lasting effect of the restrictions was to delay the adoption of security tools that protected American citizens and businesses.

The containment failed. It almost always does.

The parallel to AI is not exact, but it is instructive. The knowledge required to build frontier AI models is not a state secret locked in a government vault. It is distributed across thousands of published papers, open-source codebases, and university research programs. The fundamental techniques of transformer architecture, reinforcement learning from human feedback, and chain-of-thought reasoning are public knowledge. What the major labs possess that others do not is primarily compute budgets and proprietary datasets, advantages that are real but not permanent, and that do not constitute the kind of secret whose disclosure would compromise national defense. Attempting to contain AI capability through intellectual property enforcement is structurally similar to attempting to contain cryptographic capability through export controls. Nathan Lambert, a prominent open-source AI researcher, has argued that restricting distillation through API access controls is "almost impossible" and that GPU restrictions would carry "far greater weight" than any intellectual property enforcement. The implication is telling: if the actual policy lever is hardware, then the IP argument is doing something other than what it claims.

The knowledge proliferates. The tools proliferate. The only question is whether the restrictions serve their stated purpose or merely protect the commercial position of the parties that lobbied for them.

The timing of Anthropic's security framing invites its own question. The distillation paper appeared during an active policy debate over U.S. AI chip export controls, a debate in which the major American AI companies had direct commercial stakes. Restricting chip exports to China would slow Chinese AI development, which would preserve the competitive advantage of American labs. Framing distillation as a national security threat aligned seamlessly with the commercial interest in maintaining that advantage. This does not prove that the security concerns are fabricated. It does mean that the security argument arrives wrapped in a commercial incentive so obvious that the audience cannot be blamed for noticing it.

The public noticed. Both rejections, the IP claim and the security argument, failed not because the public lacked the sophistication to evaluate them but because the public possessed exactly enough information to see through them. The facts were on the record. The settlements were public. The lawsuits were filed. And when Anthropic asked the world to treat its model outputs as sacred property and its market position as a bulwark of democracy, the world responded with the only reaction the facts supported: disbelief.

The Bidirectional Mirror

Anthropic's distillation paper framed the problem as a one-directional act of theft: Chinese labs extracting American capabilities. The framing is clean and politically convenient. It is also technically dishonest.

In January 2025, a peculiar behavior surfaced in DeepSeek V3 that undercut the entire premise of clean intellectual property boundaries in frontier AI. When users asked the model to identify itself, it occasionally responded that it was ChatGPT. The behavior was widely documented and attributed to training data contamination, likely the result of distillation from OpenAI's outputs during DeepSeek's development process. The incident was treated as an embarrassment for DeepSeek, evidence that the Chinese lab had copied its American competitor. But the implications run in more than one direction.

Reports have circulated that Claude, when asked in Chinese what model it is, has identified itself as DeepSeek. If true, the contamination is not a one-way street running from West to East. It is a loop in which every major model carries traces of every other, a hall of mirrors where the reflections have become indistinguishable from the originals. The models are not discrete products manufactured in isolation. They are nodes in a shared intellectual network, each one shaped by outputs that the others have already produced.

This should not surprise anyone who understands how these systems are built. The training data for every frontier model draws from the same vast substrate: academic papers published in open-access journals, open-source code hosted on GitHub, the accumulated text of the public internet, and, increasingly, the outputs of other models that have already processed all of the above. The common pool of human knowledge does not respect corporate boundaries. Google Brain's 2017 transformer paper and DeepMind's Chinchilla scaling laws shaped training decisions at every lab that followed, OpenAI and Anthropic and DeepSeek alike. Techniques developed in open-source communities, from quantization methods to fine-tuning approaches, propagate just as freely, carried by the same arXiv preprints and conference proceedings that no corporate boundary can contain.

The result is that no frontier model is a self-contained creation. Each one is a crystallization of collective knowledge, a compressed representation of the shared intellectual output of thousands of researchers, millions of authors, and billions of internet users whose words became training data without their knowledge or consent. The weights are proprietary; the knowledge they encode is not. The particular arrangement of parameters is novel, granted, but what those parameters represent is a common inheritance that was never proprietary to begin with, and no act of computation changes that fact.

The shared origin of the knowledge is what makes the "theft" framing collapse under its own weight. When DeepSeek queries Claude to improve its own model, it is extracting knowledge that Anthropic itself extracted from the commons. When Anthropic detects traces of its outputs in a competitor's system, it is observing the same process of intellectual absorption that produced Claude in the first place. The flow of knowledge is not a pipeline running from creator to thief. It is an ecosystem in which every participant consumes and produces, absorbs and radiates, in patterns too entangled to assign clean ownership.

Distillation, put directly, is not an aberration. It is the condition of the entire field. Every model is a distillation of every other model, mediated through the shared training substrate of the public internet. Technical analysis also undercuts the notion that distillation amounts to simple copying. Lambert has noted that DeepSeek's roughly 150,000 exchanges with Claude likely had "negligible impact" on the resulting model, and that student models frequently fail to replicate teacher performance through distillation alone, because subtle data interactions make the process far less reliable than the word "copying" implies. Chinese labs, Lambert argues, innovate substantially on distillation techniques precisely because restricted GPU access forces them to find alternative paths to capability. The result is not a shortcut but a different kind of engineering, one that Anthropic's framing conveniently obscures. The question has never been whether distillation happens. The question is who gets to do it.
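For readers unfamiliar with the term, the classic textbook form of distillation trains a smaller "student" model to match the softened output probabilities of a larger "teacher." The sketch below computes that objective for a single prediction using only Python's standard library. It is a minimal illustration, not a description of any lab's pipeline: the function names are hypothetical, the temperature of 2.0 is an arbitrary choice, and a chat API usually returns generated text rather than the teacher's full probability distribution, so extraction over an API is closer to fine-tuning on the teacher's sampled outputs than to this logit-matching setup.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Convert raw scores into probabilities, softened by `temperature`."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    student_logits: List[float],
    teacher_logits: List[float],
    temperature: float = 2.0,  # illustrative value, not a recommendation
) -> float:
    """KL(teacher || student) over temperature-softened distributions.

    Zero when the student reproduces the teacher's distribution exactly;
    larger the further the student's probabilities drift from the teacher's.
    """
    p = softmax(teacher_logits, temperature)  # teacher's "soft labels"
    q = softmax(student_logits, temperature)  # student's current guess
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]
    print(distillation_loss([4.0, 1.0, 0.5], teacher))  # identical logits: 0.0
    print(distillation_loss([0.5, 1.0, 4.0], teacher))  # mismatched: positive
```

In practice this term is typically scaled by the squared temperature and combined with an ordinary loss on ground-truth labels; the sketch omits both to keep the core idea visible. Driving the loss to zero on a handful of examples says little about matching the teacher across everything it can do, which is one way to see why distillation alone often falls short of the teacher.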

And the answer that Anthropic's paper proposed, that well-resourced Western corporations should distill freely while others should be prevented from doing so, is not a principle. It is a privilege claim dressed up as security policy and intellectual property doctrine. It is the enclosure logic applied not to land or to creative works but to intelligence itself, to the emergent capability that arose from the largest collective knowledge project in human history. Claiming ownership over what emerged from that project requires a theory of property so expansive that it would grant title to the ocean because you happened to be the last one to dip your bucket.

The Digital Commons

If enclosure is the wrong model for intelligence, what does the right model look like? The answer is not hypothetical. It is running underneath this essay as you read it, serving the webpage, routing the packets, executing the code. The most successful commons in human history is not a pasture or a fishery. It is open source software, and it has already won the argument that the AI enclosers are still trying to have.

The numbers are not subtle. Linux, released by Linus Torvalds in 1991 as a free operating system kernel, now runs more than 96 percent of the world's top million web servers. Apache and Nginx, both open source, serve a large share of the world's websites. Python became the lingua franca of AI research not because a corporation marketed it but because researchers chose it, collectively, over years of shared practice. Git coordinates collaborative development for virtually every major technology company and open source project in existence. And beneath all of it, the TCP/IP protocol suite, developed through open processes and released without proprietary restriction, provides the foundational infrastructure of the internet itself. Every packet that carries a model's output, every API call that queries a frontier system, every settlement payment that Anthropic wires to the authors whose books it pirated, travels over protocols that no one owns.

These achievements constitute the substrate on which the entire digital economy operates. Strip away the open source layer and there is no AI industry. There is a collection of proprietary applications with no operating system to run on, no network to connect through, and no collaborative infrastructure to build with.

The critical point is that this commons was not an accident. It did not emerge from neglect or from the absence of alternatives. It was built deliberately, by people who understood that shared infrastructure creates more value than private enclosure, and it was defended, sometimes ferociously, against repeated attempts to capture it. When IBM, Microsoft, and Oracle each attempted at various points to absorb, contain, or compete open source out of existence, the commons held. It held because its defenders had designed legal and social structures to resist capture.

This is the distinction that casual critics of the commons consistently miss. The commons is not the absence of rules. It is a different set of rules, designed for different ends. The GPL requires that distributed modifications to licensed software be released under the same terms, while the MIT License permits nearly unrestricted use but requires attribution. The Apache License adds explicit patent protections. Each creates structure, imposes obligations, and defines boundaries, while distributing rather than concentrating ownership. They keep the shared resource shared by preventing enclosure rather than preventing use.

The success of this model is so thorough that the companies now attempting to enclose AI capabilities could not function without it. Anthropic's infrastructure runs on Linux. Frontier models are built with open source frameworks such as PyTorch, JAX, and TensorFlow, developed through exactly the kind of collaborative, commons-based production that the company's intellectual property claims implicitly reject. The irony runs deep into the foundation: the enclosers depend on the commons they are attempting to supersede.

But open source, for all its success, carries a vulnerability that its architects have long recognized. The legal and social norms that protect it are ultimately enforced by human institutions: courts, foundations, community governance. These institutions can be slow, politically captured, or simply outspent. The SCO litigation threatened Linux for years. Oracle v. Google wound through the courts for a decade over Java APIs. The commons won those fights, but the fights revealed a dependency on institutional enforcement that leaves the commons one hostile ruling away from serious damage.

This is where a second form of commons infrastructure enters the picture. Blockchain, at its core, is not a financial instrument, whatever the speculative markets have made of it. It is a coordination technology, a mechanism for building commons that do not depend on institutional trust for their enforcement. A well-designed protocol encodes its rules in mathematics rather than in legal agreements. The incentive structures are transparent and auditable. The governance is distributed rather than concentrated. The system resists capture by any single entity not because its participants are virtuous but because the architecture makes capture prohibitively expensive. It is infrastructure for building commons that structurally resist the enclosure pattern.

The key innovation is the shift from trust to verification. Open source depends on the good faith of its maintainers and the willingness of courts to enforce its licenses. Blockchain replaces those dependencies with cryptographic proof and economic incentive. Contributions are verifiable. Rewards are algorithmic. Rules are enforced by consensus mechanisms that no single party controls. The commons is maintained not because someone chose to maintain it but because the structure makes maintenance the rational choice for every participant.

This does not mean that blockchain solves every problem that open source has not. The technology carries its own vulnerabilities, its own failure modes, and its own history of capture by speculative interests. But the structural properties are real, and they address precisely the weakness that the enclosure of AI capabilities exploits. When intelligence is treated as a commons, the question becomes how to coordinate its development, maintenance, and distribution without concentrating control. Open source answered that question for software. Blockchain offers a mechanism for answering it for intelligence itself.

The Unenclosable

The mechanism has a name. Bittensor is a decentralized network designed to produce, validate, and distribute artificial intelligence as a shared resource. It is not the only project attempting to build an AI commons, but its architecture addresses the specific vulnerabilities that make enclosure possible.

Every historical enclosure described in this essay depended on a chokepoint. The English landlords needed Parliament. Disney needed Congress. The AI labs need closed weights, proprietary training pipelines, and terms-of-service agreements that forbid extraction. In each case, the encloser required a gate, an institutional mechanism through which access could be granted or denied at the discretion of whoever controlled it. Remove the gate and the enclosure fails, because there is nothing to attach the fence to.

Bittensor's architecture is designed, at the protocol level, to eliminate the gates.

The network is divided into specialized subnetworks, or subnets, each evaluating a different domain of intelligence. Within each subnet, miners compete to produce the best outputs, and validators assess those outputs and allocate rewards accordingly. The incentive token, TAO, flows to participants in proportion to the value they contribute, as measured by the network's consensus mechanisms rather than by the judgment of any central authority.
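The reward rule described above can be sketched in a few lines. What follows is a hypothetical, heavily simplified model, loosely inspired by the weight-clipping idea in Bittensor's Yuma Consensus but not its actual implementation; the function names, the `kappa = 0.5` majority threshold, and the data layout are assumptions made for illustration. Each validator's scores are normalized, each miner's score is capped at the highest value that validators holding at least half the stake agree on, and a fixed emission is split in proportion to the capped, stake-weighted scores.

```python
from typing import Dict, List, Tuple

# Hypothetical data layout: validator -> {miner: raw score}
Weights = Dict[str, Dict[str, float]]


def normalize(row: Dict[str, float]) -> Dict[str, float]:
    """Scale one validator's scores so they sum to 1 (empty if all zero)."""
    total = sum(row.values())
    return {miner: w / total for miner, w in row.items()} if total > 0 else {}


def consensus_weight(entries: List[Tuple[float, float]], total_stake: float,
                     kappa: float = 0.5) -> float:
    """Highest weight w such that validators holding at least `kappa` of all
    stake gave the miner w or more. `entries` holds (weight, stake) pairs."""
    cumulative = 0.0
    for weight, stake in sorted(entries, reverse=True):  # highest weight first
        cumulative += stake
        if cumulative >= kappa * total_stake:
            return weight
    return 0.0  # abstaining stake kept the majority from being reached


def allocate_emission(stake: Dict[str, float], weights: Weights,
                      emission: float, kappa: float = 0.5) -> Dict[str, float]:
    """Split `emission` among miners by clipped, stake-weighted scores."""
    total_stake = sum(stake.values())
    if total_stake <= 0:
        raise ValueError("total validator stake must be positive")
    norm = {v: normalize(row) for v, row in weights.items()}
    miners = sorted({m for row in norm.values() for m in row})
    scores: Dict[str, float] = {}
    for miner in miners:
        entries = [(norm[v].get(miner, 0.0), stake.get(v, 0.0)) for v in norm]
        cap = consensus_weight(entries, total_stake, kappa)
        # No single validator can lift a miner above what the stake majority supports.
        scores[miner] = sum(min(w, cap) * s for w, s in entries) / total_stake
    score_total = sum(scores.values())
    if score_total == 0:
        return {miner: 0.0 for miner in miners}
    return {miner: emission * sc / score_total for miner, sc in scores.items()}


if __name__ == "__main__":
    stake = {"A": 40.0, "B": 35.0, "C": 25.0}
    weights = {
        "A": {"m1": 1.0, "m2": 1.0},
        "B": {"m1": 0.6, "m2": 0.4},
        "C": {"m3": 1.0},  # minority validator scoring only its own miner
    }
    print(allocate_emission(stake, weights, emission=100.0))
```

In this toy run, miners `m1` and `m2` split the emission at roughly 56 and 44, while `m3` receives nothing: the validator promoting it holds only a quarter of the stake, so the majority's zero score caps its reward. The point of the design is that no single participant's judgment sets the payout; the allocation falls out of the aggregate.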

Each of these structural properties responds directly to a specific mechanism of enclosure.

The enclosure of weights depends on opacity. On Bittensor, opacity is structurally impossible. Models contributed to the network are open, inspectable, and available for others to build on, because validators must be able to evaluate model outputs. This openness is a condition of participation, part of the functional requirement rather than a philosophical preference that a future board could reverse. The work that miners produce enters the shared resource pool by design. The openness is load-bearing infrastructure.

The enclosure of training depends on centralized control over the pipeline, over which models get built and which research directions receive resources. The subnet ecosystem distributes those decisions across dozens of independent evaluation domains, each governed by its own community of validators. Capturing the network would require simultaneously seizing a majority of validation stake across every active subnet, a cost that grows with every new subnet that comes online. The architecture makes centralization progressively harder, the opposite of how concentration typically works in technology markets.

The enclosure of access depends on permission gates. TAO tokens reward contribution without requiring permission from a central authority. A miner does not need to apply for a grant, sign a licensing agreement, or secure approval from a product committee. If the work meets the subnet's quality threshold, the reward follows algorithmically. A researcher in Nairobi and a compute cluster in Singapore compete on the same terms as a lab in San Francisco, evaluated by the same transparent criteria, rewarded by the same mechanism.

These properties, taken together, address the specific vulnerability that makes AI enclosure possible. The major labs enclose intelligence by controlling the training data, the training process, and the resulting weights. Bittensor distributes all three across a network whose contributions are verified cryptographically rather than managed institutionally. There is no single point at which a fence can be erected, because no single entity controls enough of the process to decide where the fence should go.

This is not a utopian argument. Bittensor carries real vulnerabilities. The quality of subnet outputs varies. The governance mechanisms are still maturing. Token economics create incentive structures that can be gamed, and have been, by participants more interested in extracting rewards than in producing genuine intelligence. The network's total compute remains a fraction of what the major labs command. Anyone who dismisses these limitations in favor of a tidy narrative about decentralization defeating centralization is not paying attention to the engineering.

But the structural argument does not depend on Bittensor being perfect. It depends on the network being ungovernable by any single entity, and on that property being a consequence of architecture rather than of policy. The GPL can be challenged in court. A corporate open-source commitment can be reversed by a new CEO. An API's terms of service can be rewritten overnight. Cryptographic consensus and token incentives operate on a different level. They do not ask participants to trust that the rules will be maintained. They make the rules computationally expensive to break.

The enclosure pattern depends on a chokepoint. The lords fenced the pasture because it had boundaries. Disney extended copyright because Congress had jurisdiction. Anthropic enclosed its weights because the weights sat on servers it controlled. Build the commons on infrastructure where no single actor controls the boundaries, the consensus mechanism, or the distribution channel, and the pattern loses its purchase. The lords cannot fence what they cannot find, and they cannot control what lacks a center.

The architectural possibility here is real, and it is new. Whether Bittensor succeeds in building a durable AI commons depends on execution, adoption, and a hundred technical challenges not yet resolved. But for the first time in the long history of enclosure, the commons has access to infrastructure that does not merely resist capture through legal or social norms but that makes capture structurally incoherent. The fence requires a boundary. The boundary requires a center. Remove the center and the fence has nowhere to stand.

The Common Field

The historical enclosures did not go uncontested. In 1649, a group called the Diggers walked onto common land at St George's Hill in Surrey and began planting vegetables. Their leader, Gerrard Winstanley, published a pamphlet declaring that the earth was "a common treasury for all" and that the enclosers had stolen what belonged to everyone. The Levellers petitioned Parliament for rights that would have prevented the concentration of land ownership. Both movements were crushed, their leaders imprisoned or scattered, their plantings torn up by soldiers acting on behalf of the landlords. But the principle they articulated did not die with their crops. It resurfaced in the cooperative movement of the nineteenth century, in the trade unions, in the slow political recognition that enclosure was not improvement but dispossession dressed in progressive rhetoric.

The digital commons needs the same defense, but the instruments of defense have changed. The Diggers had shovels and pamphlets. The cooperatives had legal charters and collective bargaining. The defenders of the digital commons have something the earlier movements lacked: the ability to encode resistance into the infrastructure itself, to build systems where the rules of shared access are not dependent on the goodwill of landlords or the sympathies of judges but are embedded in the mathematics of how the system operates.

The dispute between Anthropic and DeepSeek is not the fight that matters. It is a quarrel between two enclosers about whose fence is legitimate, two parties arguing over the right to control a resource that neither of them created. Anthropic built its models on the accumulated output of millions of authors, researchers, and musicians who were never consulted. DeepSeek built on that same substrate, with Anthropic's outputs folded in. Both claim ownership. Neither has a clean title. The argument between them is conducted through the rhetoric of intellectual property and national security, but its substance is simpler: which corporation gets to charge rent on the commons.

The real question is whether intelligence, the emergent capability that arose from the largest collective knowledge project in human history, remains a shared resource or becomes another enclosed field. The ocean does not belong to the last person who dipped a bucket. The knowledge of civilization does not become private property because a company with sufficient compute processed it into a new arrangement of floating-point numbers. The transformation is real, but the raw material was never theirs to claim, and the act of transformation does not erase the debt.

Thomas More watched the sheep devour the commons five centuries ago and understood that the animals were not the point. The point was the logic that converted shared sustenance into private wealth and then made the conversion appear inevitable, even virtuous. That logic is old enough to feel like a law of nature, but it is a choice, maintained by power and enforced by institutions that power controls.

The commons at St George's Hill was replanted. The fields the Diggers sowed in 1649 were destroyed, but the Surrey countryside is still there, still producing, long after the names of the landlords who sent the soldiers have been forgotten. The soil does not remember who fenced it. It remembers what grew.

Disclosure: The author is a member of the team at Covenant AI, a company that operates mining and validation infrastructure on the Bittensor network. This essay represents personal analysis and commentary.